If you’ve been reading about VPNs, you’ve almost certainly come across OpenVPN. It’s been the industry’s gold standard for over two decades — battle-tested, highly secure, and trusted by security professionals worldwide.

But for a long time, it had one frustrating weakness: speed.

OpenVPN was noticeably slower than newer protocols like WireGuard, and the honest answer to “why?” was that OpenVPN was built in a way that made raw performance hard to achieve.

Then came DCO.

This article explains exactly what that means, why it matters, and whether you need to do anything to benefit from it. No sysadmin experience required.

What is OpenVPN DCO? (The Short Answer)

OpenVPN DCO (Data Channel Offload) is a performance upgrade to OpenVPN that moves data encryption out of your VPN app and into the core of your operating system, delivering throughput roughly 2x to 10x higher than traditional OpenVPN with no change to its encryption. DCO support arrived with OpenVPN 2.6, the kernel module ships built in from Linux kernel 6.16, and several major commercial VPN providers already run it on their servers.

Why Was OpenVPN Slow in the First Place?

To understand DCO, you first need a quick mental picture of how your computer is organised internally. Stick with this analogy and everything else will click.

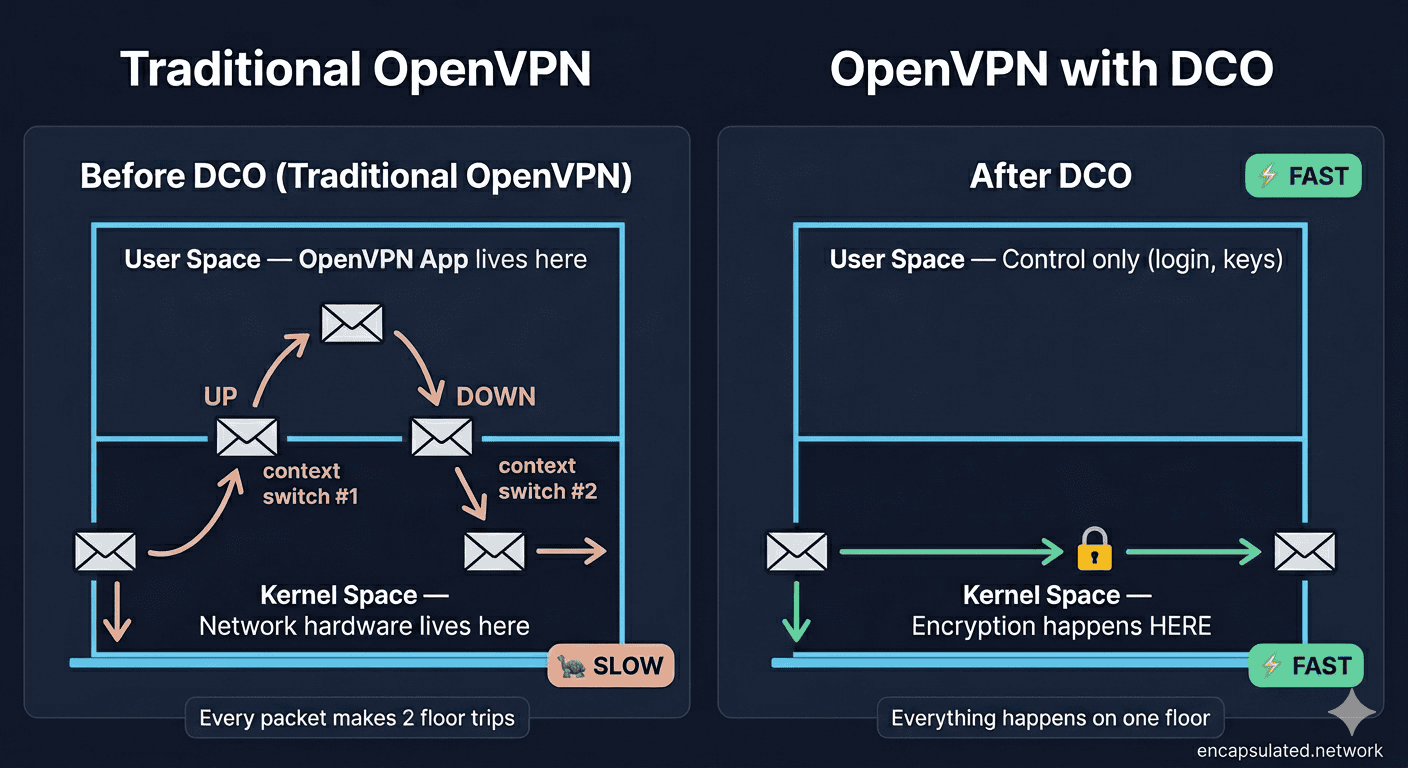

The Two Floors of Your Computer

Think of your computer as a building with two floors.

The ground floor is called kernel space. This is where the operating system lives — the core software that talks directly to your hardware, manages memory, and controls all network traffic. It’s fast, tightly controlled, and efficient.

The upper floor is called user space. This is where all your regular applications live: your browser, your email client, your VPN app. It’s flexible and comfortable, but it has no direct access to the hardware. Any time an app on the upper floor needs something from the hardware, it has to send a request down to the ground floor and wait for a response.

Here’s the problem: traditional OpenVPN lived entirely on the upper floor.

The Ping-Pong Problem

When encrypted VPN traffic arrives on your device, it starts on the ground floor (kernel space). But because OpenVPN ran in user space, every single data packet had to make a round trip:

- Packet arrives in kernel space (ground floor)

- Gets sent up to OpenVPN in user space (upper floor) for decryption

- Travels back down to kernel space to be forwarded on

This constant shuttle service happens for every single packet your VPN handles, and each trip costs a context switch plus a copy of the packet data between the two floors. On a fast connection pushing thousands of packets per second, that overhead adds up fast. The result: slower speeds, higher CPU usage, and a hard performance ceiling that WireGuard, which was built to live entirely on the ground floor, simply didn’t have.

What Does OpenVPN DCO Actually Do?

DCO fixes this by permanently moving the data-handling work down to the ground floor. But to understand how it works cleanly, it helps to know that a VPN connection is actually two separate things running at the same time.

Brain vs. Brawn — The Control Plane and the Data Plane

Every OpenVPN connection has two distinct jobs:

- The Control Plane — the “brain.” This handles authentication, key negotiation, and session management. It needs flexibility and complex logic. It stays in user space.

- The Data Plane — the “brawn.” This handles the actual encryption and decryption of your data packets. It’s repetitive, high-volume, and speed-critical.

DCO separates these two jobs cleanly. The control plane stays where it has always been — in user space, handled by OpenVPN as normal. The data plane moves permanently into kernel space.

The Fast Path

Once DCO is active, the packet journey looks completely different:

- Packet arrives in kernel space

- Gets encrypted or decrypted in kernel space — no round trip needed

- Gets forwarded from kernel space directly

No more shuttle trips. No copying data back and forth. The bottleneck is gone.
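To make the difference concrete, here is a toy model that counts how often a packet crosses the boundary between the two floors on each path. It is only a sketch: the path lists and the packet count are illustrative assumptions, not measurements of real OpenVPN behaviour.

```python
# Toy model: count kernel/user-space boundary crossings per packet.
# The paths mirror the two packet journeys described in this article;
# the numbers are illustrative, not benchmarks.

LEGACY_PATH = ["kernel", "user", "kernel"]  # arrive, decrypt in user space, forward
DCO_PATH = ["kernel", "kernel", "kernel"]   # arrive, decrypt, forward: all in kernel


def boundary_crossings(path):
    """Count transitions between kernel space and user space along a path."""
    return sum(1 for here, there in zip(path, path[1:]) if here != there)


def total_crossings(path, packets):
    """Total boundary crossings for a given number of packets."""
    return boundary_crossings(path) * packets


if __name__ == "__main__":
    packets = 100_000  # hypothetical packet count
    print("legacy crossings:", total_crossings(LEGACY_PATH, packets))
    print("DCO crossings:   ", total_crossings(DCO_PATH, packets))
```

In this model the legacy path pays two crossings per packet and the DCO path pays none, which is the whole point of the offload.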

DCO also adds a second major improvement: multi-threading. Traditional OpenVPN could only use one CPU core at a time, leaving the rest of your processor idle. With DCO, encryption work is spread across multiple cores simultaneously — which means your hardware is finally working at its full potential.

What Does This Mean for You? The Real-World Benefits

Significantly Faster Speeds

The performance gains from DCO are not subtle. Depending on your hardware and connection, you can expect throughput improvements of 2x to 10x over traditional OpenVPN:

- A Raspberry Pi 4 running traditional OpenVPN typically maxes out around 120 Mbps. With DCO, the same hardware can push 400–500 Mbps.

- On mid-range x86 servers, OpenVPN used to cap out around 300–400 Mbps. With DCO, exceeding 1 Gbps is achievable.

- ExpressVPN, which announced its DCO implementation in March 2025, reported up to a 2,000% increase in performance on UDP traffic.

- Norton VPN reported that connection speeds more than doubled and latency fell by 15% after integrating DCO in September 2025.

In plain terms: if you have a gigabit fibre connection, OpenVPN with DCO can actually use it. Traditional OpenVPN often couldn’t.
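If you want to turn those figures into something tangible, a little arithmetic helps. The sketch below uses the Raspberry Pi numbers quoted above and a hypothetical 10 GB download. It ignores protocol overhead and assumes the VPN is the only bottleneck, so treat the results as rough.

```python
def speedup(before_mbps, after_mbps):
    """How many times faster the 'after' throughput is."""
    return after_mbps / before_mbps


def transfer_seconds(size_gb, mbps):
    """Seconds to move size_gb gigabytes at mbps megabits per second.

    Uses decimal units: 1 GB = 8,000 megabits.
    """
    return size_gb * 8000 / mbps


if __name__ == "__main__":
    # Raspberry Pi 4 figures from the list above: about 120 Mbps without DCO,
    # 400 to 500 Mbps with it.
    for after in (400, 500):
        print(f"120 -> {after} Mbps: {speedup(120, after):.2f}x faster")

    # Hypothetical 10 GB download at each speed.
    for mbps in (120, 400, 500):
        minutes = transfer_seconds(10, mbps) / 60
        print(f"10 GB at {mbps} Mbps: {minutes:.1f} minutes")
```

On those numbers the Pi lands in the 3x to 4x range, comfortably inside the 2x to 10x band.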

Lower Latency for Gaming and Video Calls

Faster throughput is only part of the story. Because DCO eliminates the ping-pong effect described above, it also reduces latency (the delay between sending and receiving data) and jitter (inconsistency in that delay).

If you use a VPN while gaming, on video calls, or over VoIP services, you’ll notice this directly — connections feel snappier, calls stay stable, and the lag that made VPN gaming frustrating is significantly reduced.

Better Battery Life

This one surprises people. Because DCO eliminates the constant context switching between kernel and user space, your CPU has to work much less to maintain an encrypted connection. On a laptop or mobile device, less CPU work means less heat and longer battery life — a genuine everyday benefit that goes beyond just speed numbers. For a full breakdown of what drives VPN battery drain on phones — and five settings that reduce it — see our guide on VPN battery drain on mobile.

Is OpenVPN DCO Secure?

This is the first question any privacy-conscious reader should ask, and the answer is clear: yes, DCO is secure.

Same Encryption, New Location

DCO changes where encryption happens, not how. The same rigorously vetted cryptographic algorithms — AES-256-GCM, AES-128-GCM, and ChaCha20-Poly1305 — are used whether DCO is enabled or not. Your data is protected just as strongly either way.

Modern Ciphers Only — and That’s a Good Thing

DCO does require that you use one of those three modern ciphers. Older ciphers like AES-256-CBC won’t work with DCO enabled. In practice, this is not a loss — those older ciphers have known weaknesses, and any well-configured VPN should already be using AES-GCM or ChaCha20. DCO effectively enforces best practice.
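If you manage your own configuration, you can check a cipher list against that rule in a few lines. This is a minimal sketch, assuming the list is written as a colon-separated string (the format OpenVPN’s `data-ciphers` option takes). The helper names are made up for illustration.

```python
# The three ciphers this article lists as DCO-compatible.
DCO_CIPHERS = {"AES-256-GCM", "AES-128-GCM", "CHACHA20-POLY1305"}


def split_data_ciphers(value):
    """Split a colon-separated cipher list into upper-case names."""
    return [name.strip().upper() for name in value.split(":") if name.strip()]


def unsupported_ciphers(value):
    """Return the ciphers in the list that DCO cannot handle."""
    return [name for name in split_data_ciphers(value) if name not in DCO_CIPHERS]


if __name__ == "__main__":
    print(unsupported_ciphers("AES-256-GCM:AES-128-GCM:CHACHA20-POLY1305"))
    print(unsupported_ciphers("AES-256-GCM:AES-256-CBC"))
```

An empty result means every cipher in the list is one DCO can work with.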

What About Kernel-Level Bugs?

Moving code into the kernel does raise a fair question: what happens if there’s a bug? A vulnerability in kernel space is potentially more serious than one in user space.

The OpenVPN team designed the DCO module with this in mind. The kernel module is intentionally kept “slim” — it handles only the repetitive data work, with all complex logic remaining in user space where it can be audited and patched more easily.

Early DCO versions did have issues — CVE-2025-50054, a buffer overflow vulnerability in the Windows DCO driver (ovpn-dco-win), was discovered and published in June 2025. Importantly, this affected the Windows implementation specifically, not the Linux kernel module. A fix was included in OpenVPN 2.7_alpha2. The fact that it was found, disclosed responsibly, and patched is a sign of a mature, actively maintained security project — not a reason for alarm. Keeping your VPN app up to date is the only precaution needed.

The Trade-Offs — What DCO Doesn’t Support

DCO is a major improvement, but it’s not universal. There are a few situations where it doesn’t apply.

UDP and TCP Support — What’s Changed

The original out-of-tree DCO module (ovpn-dco) only supported UDP. However, the mainline ovpn module — merged into Linux kernel 6.16 in April 2025 — supports both UDP and TCP, including the all-important TCP port 443 that lets OpenVPN bypass restrictive firewalls.

In practice, this means: if you’re on a modern server running kernel 6.16+, DCO works whether you’re on UDP or TCP. If you’re on an older out-of-tree setup, TCP connections still fall back gracefully to standard OpenVPN processing. Either way, your connection remains secure — the difference is only in performance.
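The rule above boils down to a small lookup. The sketch below encodes only what this section states for the two Linux modules. The function name and return strings are illustrative assumptions, and other platforms are deliberately left out.

```python
# Protocols each Linux DCO module can offload, as described in this section.
OFFLOAD_SUPPORT = {
    "ovpn": {"udp", "tcp"},  # mainline module, Linux 6.16+
    "ovpn-dco": {"udp"},     # original out-of-tree module
}


def data_path(module, protocol):
    """Say where packets are processed for a module/protocol pair."""
    supported = OFFLOAD_SUPPORT.get(module, set())
    if protocol.lower() in supported:
        return "kernel (DCO)"
    # Unsupported combinations fall back to standard OpenVPN processing.
    return "user space (standard OpenVPN)"


if __name__ == "__main__":
    for module in ("ovpn", "ovpn-dco"):
        for protocol in ("udp", "tcp"):
            print(f"{module:9} {protocol}: {data_path(module, protocol)}")
```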

No Compression — and Here’s Why That’s Actually Good

DCO does not support OpenVPN’s legacy compression features (LZO and LZ4). This sounds like a downside until you understand why: those compression methods are vulnerable to the VORACLE attack, a class of cryptographic exploit that can leak information when compression is combined with encryption. Disabling compression is the right call for security, and on modern networks with fast connections, compression provides no meaningful speed benefit anyway. DCO simply makes this best practice the only option.
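If you self-host, it is worth scanning an existing config for leftover compression settings before switching DCO on. The sketch below flags any line that starts with OpenVPN’s `comp-lzo` or `compress` directives. It is deliberately simple: it does not interpret the arguments, and the sample config is a made-up example.

```python
# Directives that turn on or negotiate legacy compression.
COMPRESSION_DIRECTIVES = ("comp-lzo", "compress")


def compression_lines(config_text):
    """Return (line_number, line) pairs that use a compression directive."""
    hits = []
    for number, raw in enumerate(config_text.splitlines(), start=1):
        line = raw.strip()
        # Skip blank lines and comments (OpenVPN accepts both '#' and ';').
        if not line or line[0] in "#;":
            continue
        if line.split()[0] in COMPRESSION_DIRECTIVES:
            hits.append((number, line))
    return hits


# Hypothetical config used only for illustration.
SAMPLE = """\
# example server config
proto udp
comp-lzo
data-ciphers AES-256-GCM:CHACHA20-POLY1305
"""

if __name__ == "__main__":
    for number, line in compression_lines(SAMPLE):
        print(f"line {number}: {line}")
```

Any line it reports deserves a second look before you rely on DCO.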

System Requirements

On Linux, DCO comes in two forms, and other platforms have their own requirements:

- Mainline kernel module (`ovpn`): built into Linux kernel 6.16+. No extra installation needed. This is what most modern servers will use going forward.

- Out-of-tree module (`ovpn-dco`): requires Linux kernel 5.2+ and manual installation. Works on kernels between 5.2 and 6.15 where the mainline module isn’t yet available.

- OpenVPN 2.6 or later: required on the server. Your client doesn’t need to be on 2.6+ to benefit; a DCO-enabled server alone provides performance improvements for all connecting clients.

- Windows 11 (or Windows 10): DCO is included in the OpenVPN Connect app for Windows via a separate driver (`ovpn-dco-win`).

- macOS: DCO support on macOS is still in development and not yet fully available. Mac users connecting to DCO-enabled servers will benefit from server-side improvements, but the full client-side DCO experience isn’t there yet.

- FreeBSD / pfSense: FreeBSD has its own kernel DCO module (`if_ovpn`), actively maintained and used in pfSense deployments.

Some consumer routers running older firmware — including certain Asus models running AsusWRT — are on Linux 4.x kernels and cannot use DCO. If you run a self-hosted VPN on a router, check your kernel version before expecting DCO support.
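The Linux kernel thresholds above are easy to get wrong by eye, since 6.2 is older than 6.16 even though it looks bigger as a decimal. Here is a minimal sketch that maps a kernel release string, in the style `uname -r` prints, to the DCO option this article describes. The function names and the sample release strings are illustrative.

```python
import re


def parse_kernel(release):
    """Extract (major, minor) from a release string such as '6.16.3-generic'."""
    match = re.match(r"(\d+)\.(\d+)", release)
    if match is None:
        raise ValueError(f"unrecognised kernel release: {release!r}")
    return int(match.group(1)), int(match.group(2))


def dco_option(release):
    """Map a Linux kernel release to the DCO module described in this article."""
    version = parse_kernel(release)
    # Tuples compare numerically, so (6, 2) correctly sorts before (6, 16).
    if version >= (6, 16):
        return "mainline ovpn module (built in)"
    if version >= (5, 2):
        return "out-of-tree ovpn-dco module (manual install)"
    return "no DCO support"


if __name__ == "__main__":
    for release in ("6.16.0", "6.2.0-39-generic", "5.15.0", "4.1.52"):
        print(f"{release}: {dco_option(release)}")
```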

OpenVPN DCO vs. WireGuard — Where Does the Debate Stand Now?

If you’ve read our WireGuard vs. OpenVPN comparison, you’ll know that WireGuard’s biggest concrete advantage was speed — specifically, the fact that it ran in kernel space while OpenVPN didn’t. DCO directly addresses that.

How DCO Closes the Speed Gap

With DCO, OpenVPN now operates on the same architectural level as WireGuard for data handling. According to GL.iNet’s own product specifications for their 2026 travel routers, OpenVPN with DCO achieved 700 Mbps versus WireGuard’s 600 Mbps on the same hardware — a manufacturer-reported figure, but one consistent with the architectural gains DCO delivers. That result would have been unthinkable two years ago.

Where WireGuard Still Has the Edge

DCO doesn’t make every aspect of OpenVPN as fast as WireGuard. WireGuard still has advantages in:

- Mobile reconnections — WireGuard handles switching between networks (Wi-Fi to mobile data) more gracefully

- Handshake speed — initial connection setup is faster on WireGuard

- Codebase simplicity — WireGuard’s ~4,000 lines of code is far easier to audit than OpenVPN’s larger codebase

The Best of Both Worlds

Here’s what OpenVPN keeps that WireGuard still can’t match: it can run over TCP on port 443, the same port normal HTTPS traffic uses. On highly restrictive networks that block everything except standard web ports, OpenVPN with TCP 443 can often connect when WireGuard can’t.

With DCO, you now get WireGuard-level performance on unrestricted networks, plus OpenVPN’s unmatched ability to bypass firewalls when you need it. That combination is genuinely new, and it changes the calculus of the WireGuard vs. OpenVPN debate significantly.

OpenVPN Legacy vs. OpenVPN DCO vs. WireGuard — Quick Comparison

| Feature | OpenVPN (Legacy) | OpenVPN + DCO | WireGuard |
|---|---|---|---|
| Runs in kernel space | ❌ No | ✅ Data plane yes | ✅ Yes |
| Max throughput (mid-range hardware) | ~400 Mbps | 1 Gbps+ | 1 Gbps+ |
| Multi-threaded encryption | ❌ No | ✅ Yes | ✅ Yes |
| TCP port 443 support | ✅ Yes | ✅ Yes | ❌ No |
| Works on restrictive networks | ✅ Best in class | ✅ Best in class | ⚠️ Limited |
| Mobile reconnection speed | ⚠️ Moderate | ⚠️ Moderate | ✅ Excellent |
| Compression support | ✅ Yes (LZO/LZ4) | ❌ No (security) | ❌ No |
| Codebase size | Large | Large | ~4,000 lines |
| Maturity | ✅ Protocol 20+ years | ✅ Protocol 20+ years; kernel module in mainline since Linux 6.16 | ✅ In kernel since 2020 |

Which VPN Services Already Use DCO?

The good news: if you use a mainstream commercial VPN, there’s a strong chance DCO is already running on the servers you connect to.

- Windscribe — integrated DCO in March 2025

- Norton VPN — integrated DCO in September 2025

- ExpressVPN — announced DCO implementation in March 2025

Other providers may be running it without a public announcement. In April 2025, DCO was merged into the Linux kernel mainline (shipped in Linux 6.16), which means any VPN provider running a modern Linux server has the capability built in at the OS level. If their OpenVPN version is 2.6 or later, there’s a good chance they’re using it.

Do You Need to Do Anything?

If You Use a Commercial VPN

Almost certainly not. If your VPN app is up to date and you’re connecting to a provider running OpenVPN 2.6+ on modern servers, DCO is already active on the server side. Keep your app updated and you’re done.

If You Self-Host OpenVPN

You’ll want to check a few things:

- Ensure your server is running OpenVPN 2.6 or later — note that only the server needs DCO for performance improvements to kick in; all connecting clients benefit even if their client app doesn’t explicitly support DCO

- Confirm your server’s Linux kernel version (`uname -r` in terminal): kernel 6.16+ includes the built-in `ovpn` module; kernels 5.2–6.15 can use the out-of-tree `ovpn-dco` module with manual installation. For the complete OpenVPN client setup on Linux, including both the modern OpenVPN 3 client and the legacy 2.x systemd service approach, see our Linux VPN setup guide.

- In your server config, add or confirm: `data-ciphers AES-256-GCM:AES-128-GCM:CHACHA20-POLY1305`

- In the OpenVPN Connect app on Windows, go to Settings → Advanced Settings → Enable DCO

How to Verify DCO Is Active

Once connected, check your OpenVPN connection logs. The identifier depends on which module is running:

- On kernel 6.16+ (mainline module): look for `ovpn` in the log output

- On kernel 5.2–6.15 (out-of-tree module): look for `ovpn-dco` or `ovpn-dco-v2`

If you see the traditional tun interface instead of either of those, DCO is not active — which usually means the server or client doesn’t meet the requirements above.
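If you would rather not read logs by eye, a short script can search for those identifiers. This is a rough sketch only: real OpenVPN log wording varies by version, the sample strings in the usage section are invented placeholders rather than genuine log output, and a match is a hint, not proof, that DCO is active.

```python
import re

# Checked longest-first so "ovpn-dco-v2" is never reported as plain "ovpn".
IDENTIFIERS = ["ovpn-dco-v2", "ovpn-dco", "ovpn"]


def detect_dco(log_text):
    """Return the first DCO identifier found as a standalone token, or None."""
    for name in IDENTIFIERS:
        # Lookarounds stop "ovpn" matching inside longer names or file
        # extensions such as "client.ovpn".
        pattern = r"(?<![\w.-])" + re.escape(name) + r"(?![\w-])"
        if re.search(pattern, log_text):
            return name
    return None


if __name__ == "__main__":
    # Placeholder lines for illustration, not real OpenVPN output.
    samples = [
        "placeholder: interface type ovpn-dco",
        "placeholder: interface type ovpn",
        "placeholder: loaded profile client.ovpn on tun0",
    ]
    for line in samples:
        print(f"{line!r} -> {detect_dco(line)}")
```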

Frequently Asked Questions

What does DCO stand for in OpenVPN?

DCO stands for Data Channel Offload. It refers to moving the data encryption and decryption work in OpenVPN from user space (where applications run) into kernel space (the core of the operating system), which is significantly faster and more efficient.

Does OpenVPN DCO change how secure my VPN is?

No. DCO changes where encryption happens, not how. The same strong encryption algorithms (AES-256-GCM, AES-128-GCM, and ChaCha20-Poly1305) are used with or without DCO active. The strength of your encryption is unchanged.

Is OpenVPN DCO faster than WireGuard?

On some hardware, yes, or at minimum very close. GL.iNet’s manufacturer-reported figures for its 2026 routers show OpenVPN DCO achieving 700 Mbps versus WireGuard’s 600 Mbps on the same device. On other hardware the results are neck and neck. WireGuard still has advantages in reconnection speed on mobile networks, but the raw throughput gap has largely closed.

Why doesn’t OpenVPN DCO support compression?

DCO deliberately drops support for LZO and LZ4 compression because of the VORACLE attack — a cryptographic exploit that can leak information when compression is combined with encryption. Disabling compression is the secure choice. On modern high-speed connections, compression provides no meaningful benefit anyway.

Do I need to enable DCO manually?

If you use a commercial VPN service with an up-to-date app, no — DCO is handled automatically on the server side, and even your client benefits without any changes. If you self-host OpenVPN, ensure you’re on OpenVPN 2.6+ with a server running Linux kernel 6.16+ (mainline) or 5.2+ with the out-of-tree module. On Windows, there’s a toggle in the OpenVPN Connect app under Advanced Settings.

Which VPN services use OpenVPN DCO?

Confirmed deployments include ExpressVPN, Windscribe, and Norton VPN. Other providers may be running it without a formal announcement. Any provider running OpenVPN 2.6+ on a server with Linux kernel 6.16+ has DCO available natively, with no extra module to install.

Conclusion: The Future of Privacy is Fast

OpenVPN DCO isn’t a minor patch or a performance tweak — it’s a fundamental architectural reimagining of how OpenVPN handles your data. By moving encryption to the kernel, adding multi-threading, and eliminating the constant back-and-forth between floors, DCO brings OpenVPN’s performance into the same tier as WireGuard while keeping everything that made OpenVPN the industry standard in the first place: its security, its auditability, and its unmatched ability to work on restrictive networks.

For most people, it’s already running in the background. For those configuring their own servers, it’s a version number and a config line away.

Either way, the era of “OpenVPN is secure but slow” is over.

If you want to understand the bigger picture of how VPN protocols fit together, start with how a VPN actually works. Or if you’re still deciding between OpenVPN and WireGuard, our full WireGuard vs. OpenVPN comparison covers everything you need to make the right call for your situation.